Causality Challenge #3: Cause-effect pairs

Join our Google group causalitychallenge to stay informed!

Frequently Asked Questions

Contents

Setup

Tasks of the challenge

Data

Evaluation

Submissions

Rules

Help

Setup

What is the goal of the challenge?

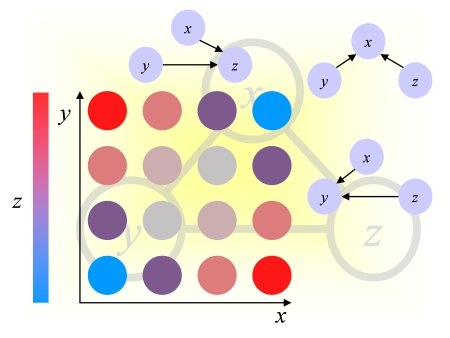

The goal is to devise a "coefficient of causation" between two variables A and B, given a number of samples that are not time-ordered. The coefficient of causation takes values between -Inf and +Inf: large positive values mean confidence that A is a cause of B (denoted A->B), and large negative values mean confidence that B is a cause of A (denoted B->A). Values near zero are interpreted as the absence of a causal relationship: either A and B are independent (denoted A|B), or A and B are consequences of a common cause (denoted A-B). Reciprocal causation (feedback loop) is not considered.

Examples labeled with truth values (A->B, B->A, A-B, or A|B) are provided for training. The participants are then evaluated with predictions made on unlabeled validation and test data. On-line feed-back is provided during the development period using the validation data. The final ranking and prize attribution is done with the test data, released at the end of the development period.

Are there prizes and travel grants?

Yes, there will be free conference registrations, cash prizes and travel grants. See the Rewards section.

Will there be a workshop and proceedings?

Yes, we are planning to have two workshops, one at IJCNN 2013, August 4-9, 2013, Dallas, Texas, and one at NIPS 2013, in December 2013 (pending acceptance). The papers will be published in JMLR W&CP, the proceedings track of JMLR, and the authors of the best papers will be invited to submit a longer version to a special topic of JMLR on experimental design. The papers will be reprinted as a book in the CiML series of Microtome. For submission deadlines, see the updated schedule.

Are we obliged to attend the workshop(s) or publish our method(s) to participate in the challenge?

Entering the challenge is NOT conditioned on publishing code, methods or attending workshops, however:

(1) If you want to be part of the final ranking and become eligible for prizes, you must submit software allowing the organizers to reproduce your results before the deadline, see the updated schedule, following prescribed guidelines. The code submitted will be kept in confidence by the organizers and used only for validation purposes. You must also fill out a survey (fact sheet) with general information about your method.

(2) If you win and wish to accept your prize, you must make your code publicly available under a popular OSI-approved license, including the training code, and you must submit a 6-page paper to the proceedings. The paper will be peer-reviewed and must be revised to the satisfaction of the reviewers as a condition to obtain the prize.

Can I attend the workshop(s) if I do not participate in the challenge or if I do not qualify for prizes?

Yes. You are even encouraged to submit papers for presentation on the topics of the workshops.

Do I need to register to participate?

Yes. You can download the data and experiment with the sample code without registering. However, to make submissions you must register on the Kaggle website. We also ask you to join the causalitychallenge Google group to get challenge notifications and workshop announcements.

Can I register multiple times?

No. You must make submissions under a single Kaggle user ID. You may be part of a team. Requests may be sent to Kaggle to merge teams.

Tasks of the challenge

How do you define causality?

For the purpose of this challenge, we consider relationships between pairs of variables {A, B} usually representing aggregate statistics like "life expectancy" of a population or measurements like "temperature". A is a cause of B means that there is a mechanism to generate B from A in the form: B = f (A, noise). Hence there is no explicit time information involved in this definition, even though B "follows from A". You are given a number of pairs of values of A and B and your task is to guess whether B = f (A, noise) or A = f (B, noise) is a plausible model.
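The definition B = f (A, noise) can be illustrated with a small simulation. This is only a sketch under stated assumptions: the function name `sample_pairs`, the Gaussian input and noise distributions, and the cubic mechanism are hypothetical choices for illustration, not the process used to generate the challenge data.

```python
import random

def sample_pairs(n, mechanism, seed=0):
    """Draw n (A, B) samples where B = mechanism(A, noise).

    Assumption: A and the noise are independent Gaussians; the
    challenge data may use entirely different distributions.
    """
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        a = rng.gauss(0.0, 1.0)        # value of the cause
        noise = rng.gauss(0.0, 0.5)    # everything else that influences B
        pairs.append((a, mechanism(a, noise)))
    rng.shuffle(pairs)                 # samples carry no time ordering
    return pairs

# Hypothetical mechanism: B = A^3 + noise, so the ground truth is A->B.
pairs = sample_pairs(1000, lambda a, e: a ** 3 + e)
```

The task is the reverse of this simulation: given only the list of (A, B) values, decide which variable played the role of the input.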

Why should we care about this problem?

Uncovering causal relationships is a central problem in science that affects nearly every aspect of our everyday life. What affects your health, the economy, climate changes, and which actions will have desired effects? Those are some of the questions addressed by causal discovery.

Aren't there already standard statistical methods to address this problem?

The gold standard for evaluating potential causal relationships is the "randomized controlled experiment", in which the influence of a number of factors on a given outcome is tested by "controlling" the factors considered (assigning them given values) and repeating measurements to randomize over uncontrollable or unknown factors. However, conducting experiments is expensive (and sometimes unethical or impossible). Our goal is to limit the number of experiments needed and prioritize them using available "observational data", which are constantly collected and available at low cost.

What is the difference between observational and experimental data?

Observational data are data collected by an "external agent" not intervening on the system at hand. Experimental data are obtained when an external agent forces some of the variables of the system to assume given values. For example, assume that you are interested in studying the influence of smoking on lung cancer. You may conduct a survey on a sample of the world's population; this is an observational study. Alternatively, you may sample subjects at random and assign them a "treatment": half of the people are forbidden to smoke and half of the people are forced to smoke; this is an experimental study. In the observational study, correlations between smoking and lung cancer may not reveal a cause-effect relationship because there may be confounding factors. For example, there may be a genetic factor that both makes people crave tobacco and increases their risk of lung cancer (common cause). In the experimental study, significant statistical dependencies are a strong suggestion of a causal relationship. Notice that preventing people from smoking may be an expensive experiment; forcing them to smoke would be unethical.

Aren't there better observational methods using more than 2 variables?

Using more than two variables, you can conduct conditional independence tests, which eventually allow you to draw stronger conclusions about causal relationships. However, such tests require a lot of data to reach statistical significance. For instance, if you have binary variables, every time you condition on a new variable, you need twice as much data to reach the same level of confidence. Furthermore, you need to assume that you know "all" the relevant variables in your system (causal sufficiency). So there is a lot of value in seeing how far we can go with only two variables.
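As a rough worked illustration of the doubling argument (assuming balanced binary variables, so that each combination of conditioning values receives about the same share of the data; n_0 is a hypothetical number of samples wanted per combination and k the number of conditioning variables):

```latex
n(k) = n_0 \cdot 2^{k},
\qquad \text{e.g. } n_0 = 100,\; k = 10
\;\Rightarrow\; n = 100 \cdot 2^{10} = 102{,}400 .
```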

Can this problem be solved at all?

The high AUC on the leaderboard after one month of competition is very promising. We checked that high scores are obtained both on real and artificial data among high ranking participants.

What applications do you have in mind?

Mining publicly available data in chemistry, climatology, ecology, economy, engineering, epidemiology, genomics, medicine, physics, and sociology, to identify previously unknown potential cause-effect relationships deserving attention.

Why are there no time series?

Using time poses different problems. We will introduce time series in an upcoming challenge.

Can I submit time series data in track 1?

Not for this challenge. The data need to be similar to the data provided by the organizers for the challenge.

Is there a minimum of data I need to submit in track 1?

No. However, we recommend submitting as many pairs as possible and at least 10. For each pair, there should preferably be more than 100 samples. In the data provided by the organizers, the pairs have between 500 and 5000 samples.

Can you give me clues on how to get started?

We provide sample code and papers in the Help section. There is also a powerpoint presentation with voice over.

What are typical methods?

A list of typical methods and their properties is found in the BMC Genomics paper by Statnikov et al. Some examples of the following approaches are found in the powerpoint presentation:

- Additive noise models (ANM) and their derivatives like the post-non-linear models (PNL), which fit the data in two ways, A=f(B,noise) and B=f(A,noise), and decide on the causal direction based on the goodness of fit, the independence of the residual (noise) and the input, and the complexity of the model. Under the assumptions of the models, cause-effect directions can be identified given enough samples if some conditions are fulfilled, such as: the function is non-invertible, the noise or the input is non-Gaussian, or the function is non-linear and there is some additive noise (not necessarily non-Gaussian). See slides 15-20.

- Latent variable models (e.g. LINGAM). All factors (variables) interacting with A or B are considered latent variables (not just "noise") and several models are fitted with maximum likelihood, under some constraints (e.g. the input and the latent variable are independent). The causal direction favored is that corresponding to the model providing the best compromise between fit and complexity. See slide 21.

- Information theoretic approaches (GPI and IGCI). The two factorizations of P(A, B) are compared: P(A|B)P(B) and P(B|A)P(A). The least complex explanation of the data is favored. Equivalently, the model corresponding to an independence between the input distribution and the mechanism producing the output is favored. See slides 24-26.
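The additive noise idea from the first item of the list can be sketched in a few lines. This is a minimal illustration under loud assumptions, not the method of any cited paper or of the organizers: the fit is a plain least-squares line, the input and noise are uniform (the "non-Gaussian" condition), dependence between residual and input is measured with a biased HSIC estimate using Gaussian kernels of fixed bandwidth 1 on standardized data, and all function names are hypothetical.

```python
import math
import random

def standardize(v):
    m = sum(v) / len(v)
    s = math.sqrt(sum((x - m) ** 2 for x in v) / len(v))
    return [(x - m) / s for x in v]

def centered_gram(v, sigma=1.0):
    """Gaussian kernel matrix of a 1-D sample, centered in rows and columns."""
    n = len(v)
    k = [[math.exp(-(a - b) ** 2 / (2 * sigma ** 2)) for b in v] for a in v]
    row = [sum(r) / n for r in k]          # row means (= column means, k is symmetric)
    total = sum(row) / n                   # grand mean
    return [[k[i][j] - row[i] - row[j] + total for j in range(n)]
            for i in range(n)]

def hsic(x, y):
    """Biased HSIC estimate: mean element-wise product of centered kernels."""
    n = len(x)
    kc = centered_gram(standardize(x))
    lc = centered_gram(standardize(y))
    return sum(kc[i][j] * lc[i][j] for i in range(n) for j in range(n)) / n ** 2

def residual_dependence(cause, effect):
    """Fit effect = slope * cause + intercept by least squares, then measure
    how strongly the residual still depends on the assumed cause."""
    n = len(cause)
    mc, me = sum(cause) / n, sum(effect) / n
    slope = (sum((c - mc) * (e - me) for c, e in zip(cause, effect))
             / sum((c - mc) ** 2 for c in cause))
    resid = [e - me - slope * (c - mc) for c, e in zip(cause, effect)]
    return hsic(cause, resid)

def anm_coefficient(a, b):
    """Positive when the model A->B leaves a more independent residual."""
    return residual_dependence(b, a) - residual_dependence(a, b)

# Toy data with ground truth A->B: linear mechanism, uniform input and noise.
rng = random.Random(0)
a = [rng.uniform(-1, 1) for _ in range(300)]
b = [x + rng.uniform(-1, 1) for x in a]
score = anm_coefficient(a, b)
```

On this toy pair the residual of the backward fit is uncorrelated with B but not independent of it, which is what the kernel statistic picks up. Real pairs would need a more flexible regression and a data-driven kernel bandwidth.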

Data

Are the datasets using real data?

Partially.

How did you determine the truth values?

For artificial pairs, they are given by the data generating process. For real pairs, we used the identity of the variables. Some variables are intrinsically "exogenous" and cannot be manipulated such as "age", so they are assumed to be causes. Some variables are known causes or known effects.

Are there categorical variables?

Yes. There are numerical, categorical, and binary variables.

Why did you include independent variables?

Arguably the problem of determining whether two variables are independent is not a "causal" problem because "correlation does not mean causation" or, more generally, two statistically dependent variables are not necessarily causally related (there may be, for instance, a common cause explaining the dependency). However, our goal is to find a "coefficient of causation" of two variables A and B with large positive values for A causes B, large negative values for B causes A, and values near zero for other cases. Those other cases include independent variables.

Determining independence is a well-studied problem. There exist many statistics measuring independence. One of them is the well-known Pearson correlation coefficient. However, this coefficient may be used only between two numerical variables and it does not capture all types of dependencies. The Hilbert-Schmidt independence criterion (HSIC) generalizes the Pearson correlation coefficient to complex non-linear dependencies using the "alignment" between two kernel matrices K=[k(xi, xj)] and L=[l(yi, yj)] (HSIC = sum of the element-by-element product of K and L or, equivalently, Tr(KL), for centered kernels). For one categorical and one numerical variable, you may use the ANOVA test or the Kruskal-Wallis test. For two categorical variables, you may use the Chi-squared test.
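Written out, the HSIC description above corresponds to the following sketch, where H is the usual centering matrix and n the number of samples. The normalization constant is deliberately left open because conventions differ between references:

```latex
H = I_n - \tfrac{1}{n}\mathbf{1}\mathbf{1}^{\top}, \qquad
\tilde K = H K H, \qquad \tilde L = H L H,
\qquad
\mathrm{HSIC}(x, y) \;\propto\; \sum_{i,j} \tilde K_{ij}\,\tilde L_{ij}
= \mathrm{Tr}(\tilde K \tilde L) = \mathrm{Tr}(K H L H).
```

The last equality uses the symmetry of the kernel matrices, H H = H, and the cyclic property of the trace.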

Why did you hide the identity of the variables?

We do not want people to make guesses based on the identity of the variables.

How easy would it be to identify the causal relationship by eyeballing the data?

For some pairs it is easy, but for most pairs it is hard or impossible.

Why did you release additional data?

We released supplementary data (SUP1-3) and final data (CEfinal) that addressed a data normalization and quantization problem introducing a potential bias for some of the causal classes. CEfinal contains a mix of real and artificial data. SUP1data and SUP2data are artificially generated. SUP3data include real pairs and semi-artificial A-B pairs. The supplementary data may be used as additional training data. Training is not limited to data provided.

How are the data normalized?

The variables are standardized (subtract mean and divide by standard deviation), multiplied by 10000, then rounded. The data formatting also ensures a similar distribution of the number of unique values of variables across the various classes.
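The normalization described above can be sketched as follows. Whether the organizers used the population or the sample standard deviation is not stated, so the use of `statistics.pstdev` here is an assumption, and the function name `normalize` is hypothetical.

```python
import statistics

def normalize(values, scale=10000):
    """Standardize, multiply by `scale`, and round to the nearest integer.

    Assumption: population standard deviation; a constant variable
    (zero standard deviation) is not handled in this sketch.
    """
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [round(scale * (v - mean) / std) for v in values]

normalized = normalize([1.0, 2.0, 3.0, 4.0, 5.0])
# -> [-14142, -7071, 0, 7071, 14142]
```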

How is final test data distributed?

The final training, validation, and test data are distributed similarly and include two types of pairs of variables: (1) artificial data generated in a similar way as those of SUP2data and (2) pairs of real variables from various sources. The final data is different from the original data release with respect to normalization and quantization of variables. See the data page for details.

Are the classes balanced?

The proportions of A->B, B->A, A|B, and A-B are relatively balanced, but they are not exactly identical. The number of samples per variable pair is distributed similarly in all 4 classes. The quantization of variables is distributed similarly in all 4 classes in the supplementary and final data, NOT in the original TRAIN and VALID sets.

Evaluation

What is the meaning of the score on the leaderboard?

The score is the average of two AUCs rating the classification accuracy for separating "A->B" from all the other cases and for separating "B->A" from all the other cases. See the score definition.
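A minimal sketch of such a score is given below. The label encoding (+1 for A->B, -1 for B->A, 0 for A|B and A-B), the use of the negated prediction for the second AUC, and the function names are assumptions made for illustration; the score definition page remains the authoritative reference.

```python
def auc(is_positive, scores):
    """Probability that a random positive is ranked above a random negative
    (ties count one half)."""
    pos = [s for y, s in zip(is_positive, scores) if y]
    neg = [s for y, s in zip(is_positive, scores) if not y]
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

def challenge_score(truth, predictions):
    """Average of AUC(A->B vs. rest) and AUC(B->A vs. rest)."""
    forward = auc([t == 1 for t in truth], predictions)
    # For the B->A task, large negative predictions should rank first.
    backward = auc([t == -1 for t in truth], [-p for p in predictions])
    return (forward + backward) / 2

truth = [1, -1, 0, 0, 1, -1]
predictions = [2.5, -1.0, 0.3, -0.2, 0.1, -3.0]
score = challenge_score(truth, predictions)   # 0.9375 for this toy example
```

In the toy example the only ranking error is the A->B pair predicted at 0.1, which falls below one of the "other" pairs predicted at 0.3.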

Why did you not consider a four class problem?

Because it is practically attractive to have a coefficient of causation that can be used to rank pairs and prioritize experiments.

Doesn't the score chosen force us to solve a harder problem than needed?

Not necessarily. It imposes that the symmetry of the problem in A and B be exploited.

How can we compute a statistic satisfying your requirements?

One way is to create a score rating the likelihood of A->B and another rating B->A, then take the difference between them. Another way is to extract features of the distribution of A and B values and train a classifier to separate A->B from B->A, leaving a margin on either side of the decision boundary in which other cases (A|B and A-B) are forced to live. Because of the symmetry of the problem, you can get twice as many examples for A->B and B->A by swapping the roles of A and B.
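Both uses of the symmetry can be sketched briefly. The helper names, the label encoding (+1 for A->B, -1 for B->A, 0 otherwise), and the placeholder scorer are hypothetical and serve only to show the mechanics.

```python
def augment_with_swaps(pairs, labels):
    """Double the training set by swapping the roles of A and B.

    pairs:  list of (a_values, b_values)
    labels: +1 for A->B, -1 for B->A, 0 for A|B or A-B (assumed encoding)
    """
    swapped = [(b, a) for a, b in pairs]
    return list(pairs) + swapped, list(labels) + [-y for y in labels]

def antisymmetrize(score_fn):
    """Turn any directional score into a coefficient c with c(a, b) = -c(b, a)."""
    def coefficient(a, b):
        return score_fn(a, b) - score_fn(b, a)
    return coefficient

pairs = [([1, 2, 3], [2, 4, 7]), ([5, 1, 4], [0, 0, 1])]
labels = [1, 0]
aug_pairs, aug_labels = augment_with_swaps(pairs, labels)   # labels: [1, 0, -1, 0]

# Placeholder scorer with no causal meaning, used only to show the antisymmetry.
coefficient = antisymmetrize(lambda a, b: max(a) - min(b))
```

Swapping turns every A->B example into a B->A example and leaves the A|B and A-B labels at zero, so the two directional classes end up with the same number of examples.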

Will the results on the validation set or on data provided by the participants count for the final ranking?

No. The final ranking will be computed only on test data provided by the organizers.

However, a number of statistics will be computed on all the data (including validation data and data provided by the participants) and you may report these results in your paper submitted to the workshop.

Submissions

How many submissions can I make?

You may not make more than 5 submissions per day. You may select up to three final submissions.

Can I make experiments with mixed methods?

We encourage you to use a single unified methodology. However, we acknowledge that, since there are different types of variables (numerical, categorical, continuous), the problems are very different in nature and may require adjustments or changes in strategy, so we do not impose that you use strictly the same method for all cases.

Can I submit a method involving no learning at all?

Yes.

Can I submit results involving human classification?

No. This is a challenge about automatic machine-made predictions. However, if you want to provide human classification results, please contact us and we will report them for comparison.

How do I prepare the software that I will submit?

We provided instructions for preparing your code.

You must submit your software together with your selected submission(s) on validation data before the software submission deadline (see the updated schedule). If you intend to make more than one final submission (up to three are permitted), you must provide unambiguous instructions with your software so the organizers can match your software versions or options to the submissions made.

How do I submit final results?

After the software submission deadline, we will release the decryption key for the final test data. You will then have about a week to run your software and turn in prediction results (see the updated schedule). The prediction results must be generated with the software that you submitted.

How will the organizers carry out the verifications?

The burden of proof rests on the participants. Hence the participants are responsible for providing all necessary software components to the organizers so they can reproduce their results. Standard hardware and OS must be used (laptop or desktop computers running Windows, Linux or MacOS). The interface must be similar to that of the sample code. The participants are advised to provide a preliminary version of their code for testing ahead of the deadline to make sure that it runs smoothly.

The organizers will run your software on the final test data and compare the score obtained with the score of your final submission(s). You will be informed of any discrepancy and the organizers will attempt to troubleshoot the problem with you. However, if the difference alters the ranking of participants, the results obtained by the organizers using the software provided will prevail.

To ensure result reproducibility, we recommend that you eliminate any stochastic component from your software and, in particular, always initialize pseudo-random number generators in the same way to produce the exact same results every time the software is run.
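For software written in Python, fixed initialization could look like the sketch below. The seed value and the function `run_predictions` are hypothetical; any other library with its own generator would need to be seeded in the same spirit.

```python
import random

SEED = 2013  # arbitrary fixed value chosen for this sketch

def run_predictions(seed=SEED):
    """Stand-in for a stochastic prediction routine."""
    rng = random.Random(seed)      # private generator, seeded identically on every run
    return [rng.random() for _ in range(3)]

# Two runs give exactly the same numbers.
assert run_predictions() == run_predictions()
```

Using a private `random.Random` instance rather than the module-level generator avoids interference from other code that draws random numbers.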

Do I have to submit results on the final test set even though I provide my software?

Yes. This will allow us to verify that we ran your code properly.

Do I have to provide source code?

Not necessarily. Only the winners will be required to make their source code publicly available if they want to claim their prize.

Do I have to provide the training software or only the prediction software?

The participants need only to upload software to make predictions on the final validation and test data. Only the winners will be asked to make publicly available the source code of their full software package under a popular OSI-approved license, including training software, to be able to claim their prize.

Rules

The rules specify that I will have to fill out a fact sheet to be part of the final ranking. Do you have information about that fact sheet?

You will have to fill out a multiple choice question form that will be sent to you when the challenge is over. It will include high level questions about the method you used, software and hardware platform. Details or proprietary information may be withheld and the participants retain all intellectual property rights on their methods.

Will the information of the fact sheets be made public?

Yes.

Do I need to let you know what my method is?

Disclosing detailed information about your method is optional unless you are one of the winners and want to claim your prize. The winners will have to produce a 6-page paper for the proceedings.

To get my prize, does my paper need to be accepted?

Yes. Your paper will be peer reviewed and must be accepted. You will get the opportunity of revising it to the satisfaction of the reviewers.

How will papers be judged?

The reviewers will use five criteria: (1) Impact (track 1) or Performance in challenge (track 2), (2) Novelty/Originality, (3) Sanity, (4) Insight, and (5) Clarity of presentation. In track 1 (data donation), "Impact" means the scientific, technical, and/or societal impact of the cause-effect relationships discovered through the challenge (preferably validated a posteriori). In track 2 (submission of methods), "Performance" means verified final challenge performance results on test data (see reproducibility).

How will the best paper awards be attributed?

Best paper awards will distinguish papers with principled, original, and effective methods, and with a clear demonstration of the advantages of the method via theoretical derivations and well designed experiments.

Can prizes be cumulated?

Yes, you can enter both tracks and cumulate your prizes if you win in both tracks. You can also cumulate your prize in track 2 and a best paper award.

Will the organizers enter the competition?

The prize winners may not be challenge organizers.

The challenge organizers will enter benchmark submissions from time to time to stimulate participation, but these do not count as competition entries.

Can I give an arbitrary hard time to the organizers?

ALL INFORMATION, SOFTWARE, DOCUMENTATION, AND DATA ARE PROVIDED "AS-IS". ISABELLE GUYON, CHALEARN, KAGGLE AND/OR OTHER ORGANIZERS DISCLAIM ANY EXPRESSED OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR ANY PARTICULAR PURPOSE, AND THE WARRANTY OF NON-INFRINGEMENT OF ANY THIRD PARTY'S INTELLECTUAL PROPERTY RIGHTS. IN NO EVENT SHALL ISABELLE GUYON AND/OR OTHER ORGANIZERS BE LIABLE FOR ANY SPECIAL, INDIRECT OR CONSEQUENTIAL DAMAGES OR ANY DAMAGES WHATSOEVER ARISING OUT OF OR IN CONNECTION WITH THE USE OR PERFORMANCE OF SOFTWARE, DOCUMENTS, MATERIALS, PUBLICATIONS, OR INFORMATION MADE AVAILABLE FOR THE CHALLENGE.

In case of dispute about prize attribution or possible exclusion from the competition, the participants agree not to take any legal action against the organizers, CHALEARN, KAGGLE, or data donors. Decisions can be appealed by submitting a letter to Vincent Lemaire, secretary of ChaLearn, and disputes will be resolved by the board of ChaLearn.

Further help

Help may be obtained by writing to the Kaggle website forum or emailing the organizers at causality@chalearn.org. See also the Help page.

Last updated August 8, 2013.